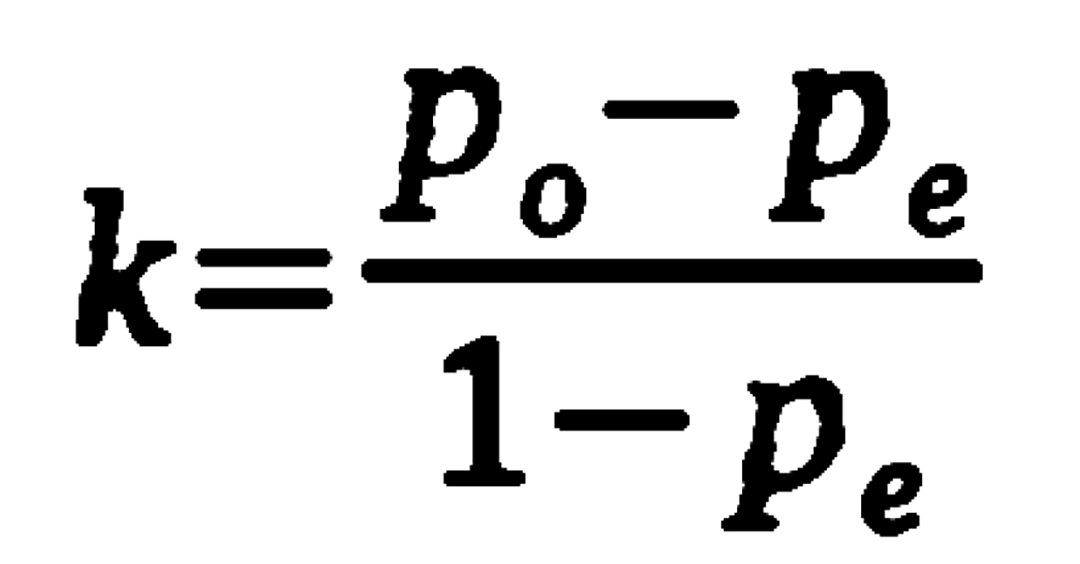

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

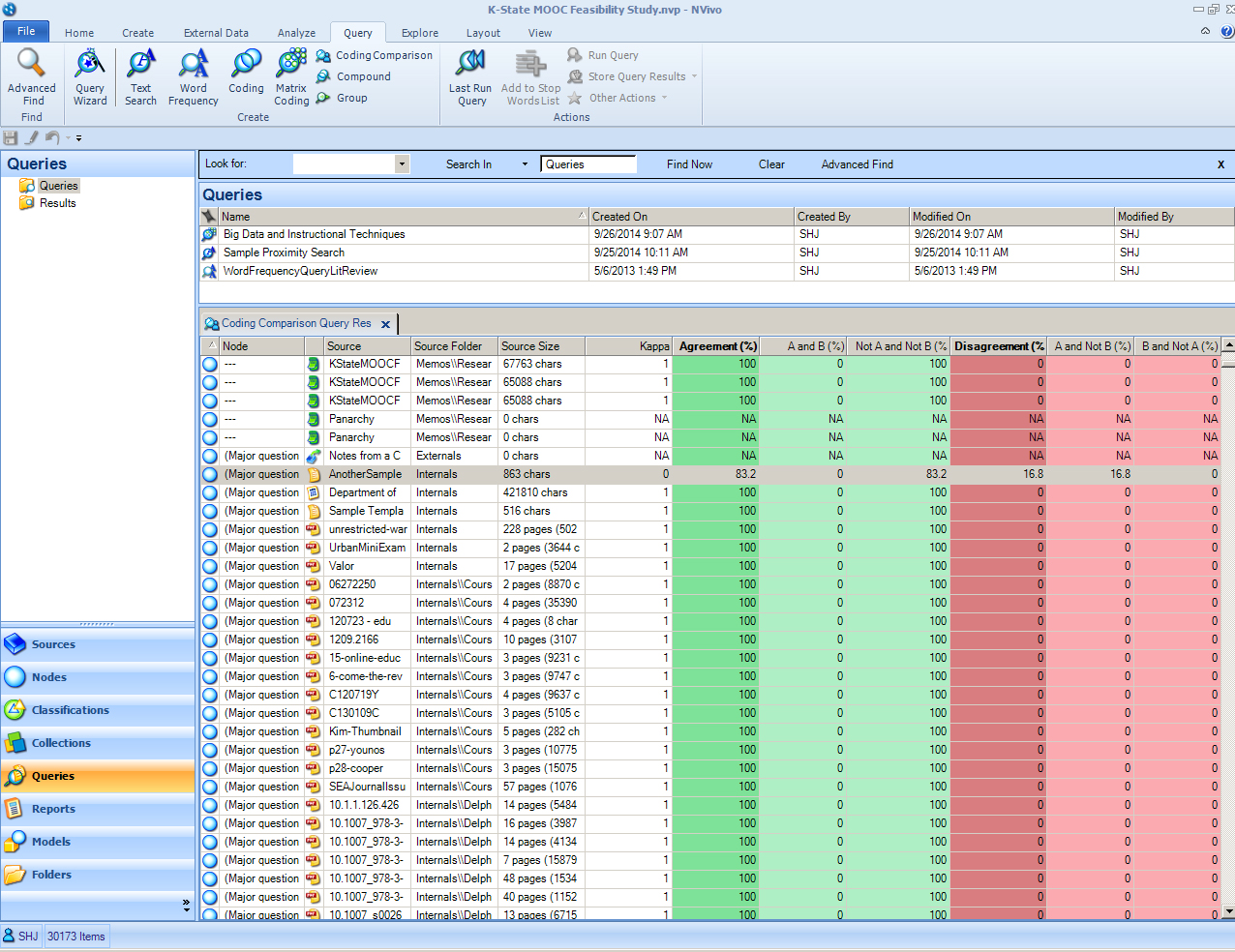

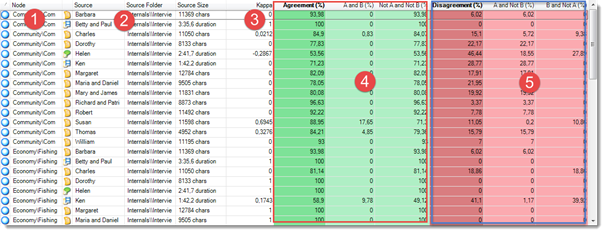

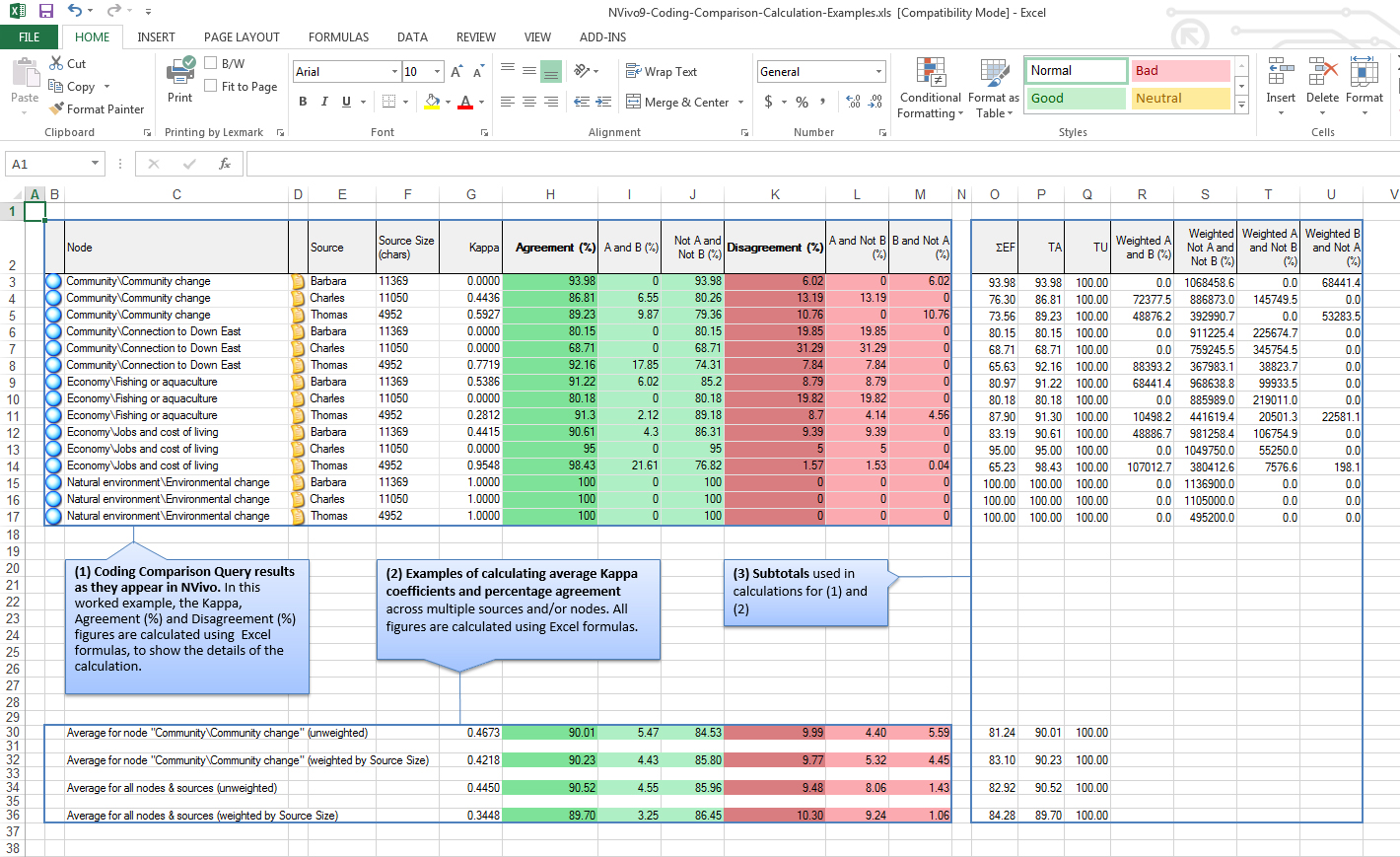

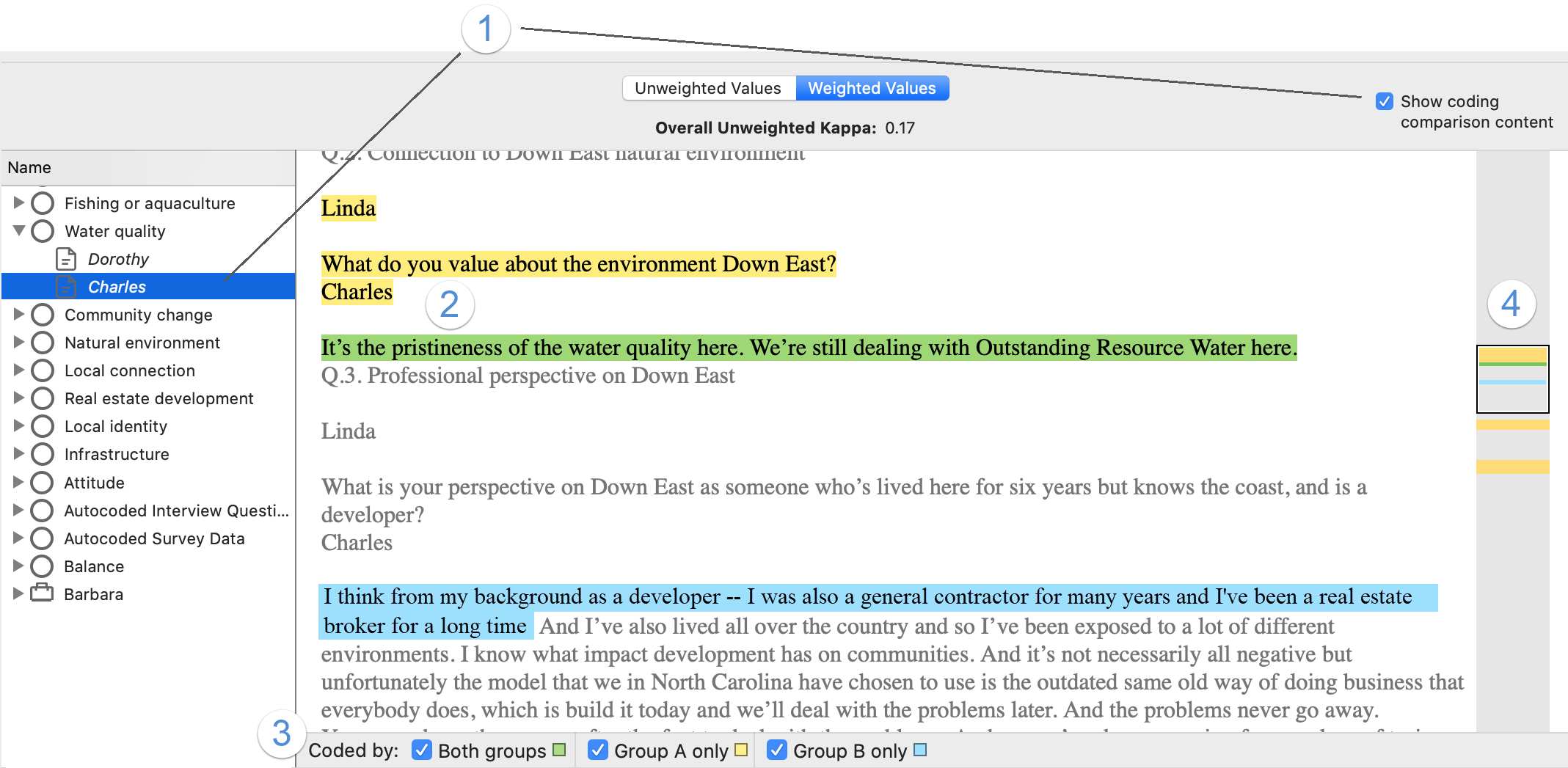

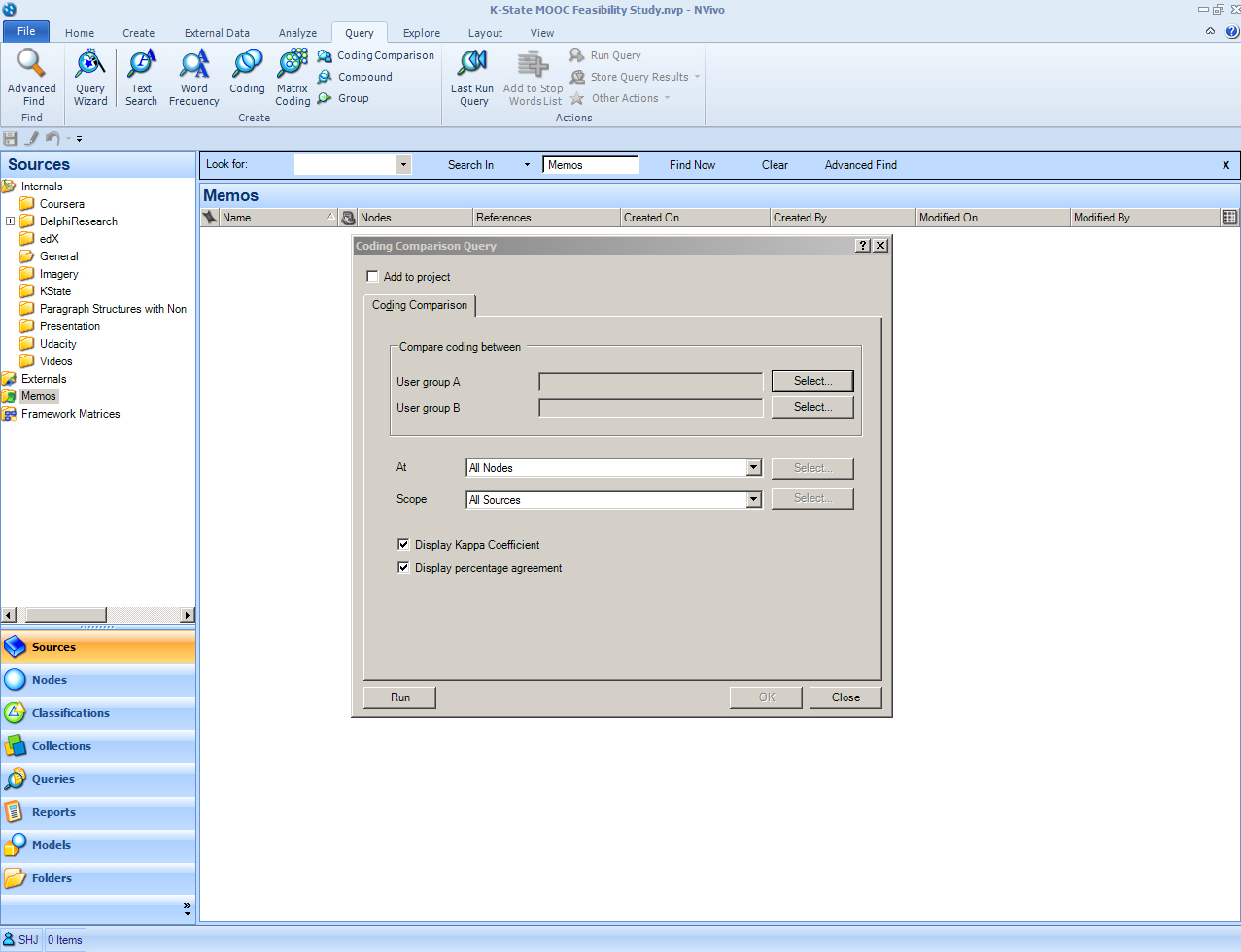

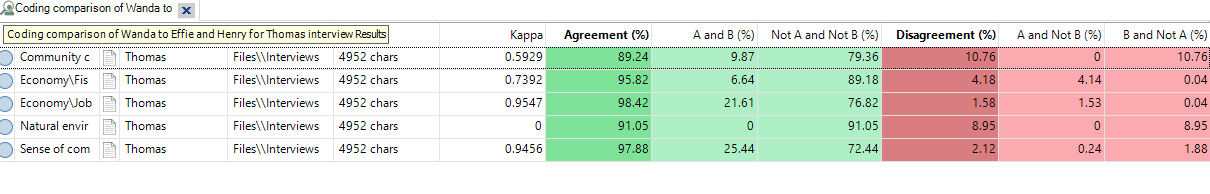

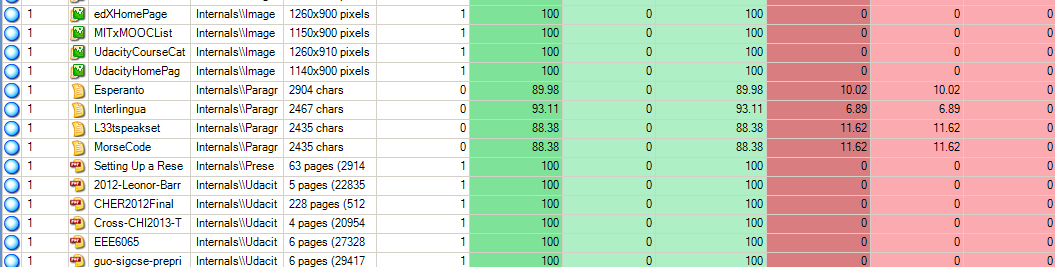

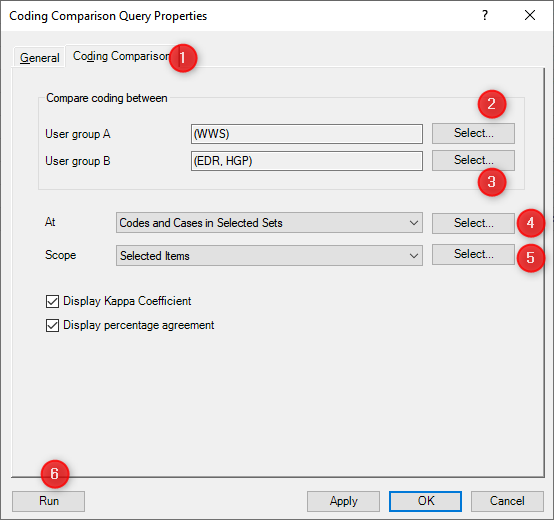

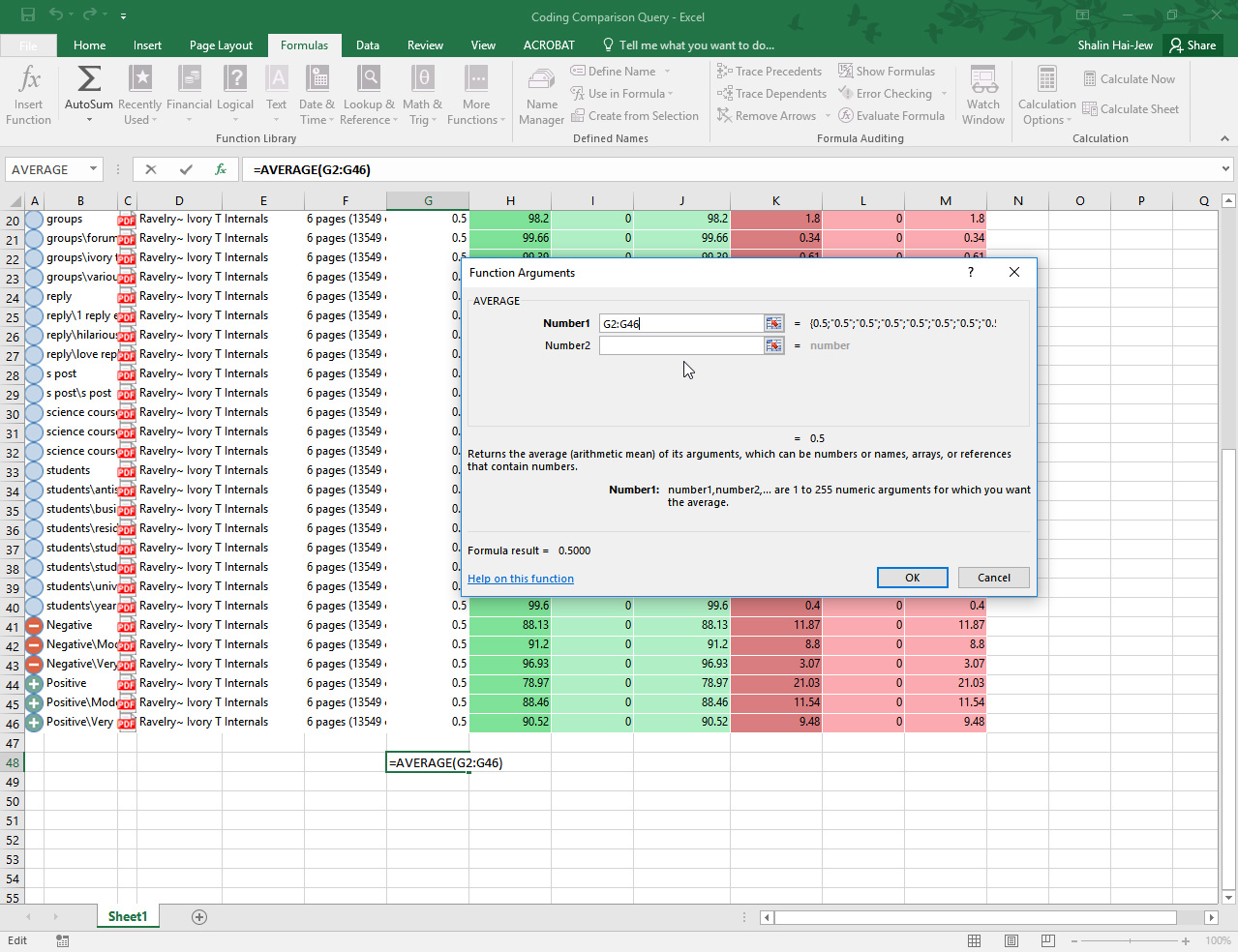

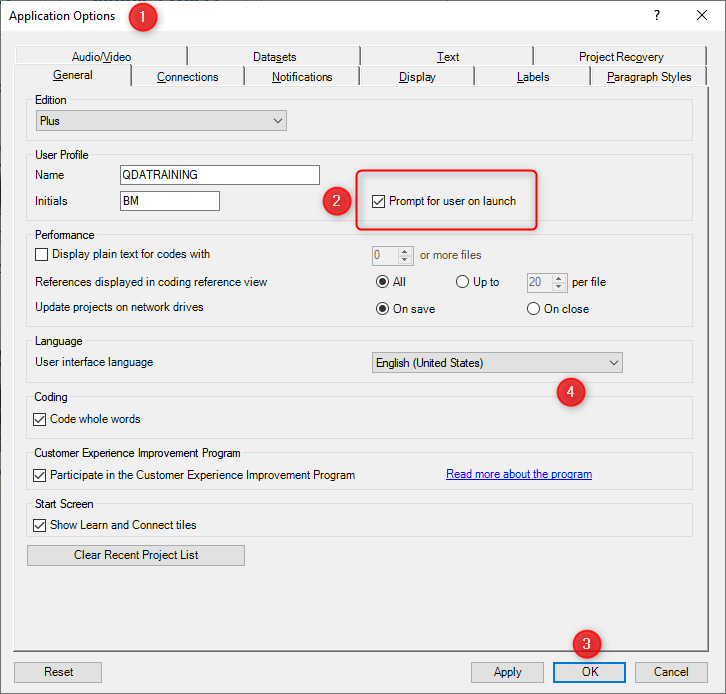

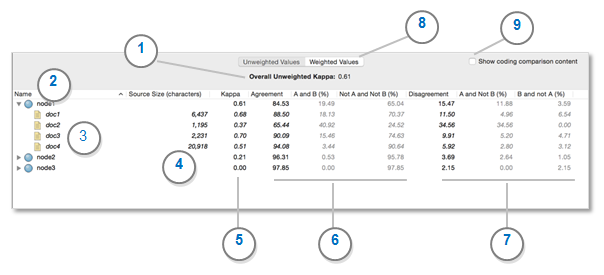

Can anyone explain how to compare the coding done by two users in NVivo 10 to measure the degree of agreement between them?
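For two coders, the usual statistic is Cohen's kappa, κ = (p_o - p_e) / (1 - p_e). Here p_o is the observed share of coding units on which both coders agree, and p_e is the agreement expected by chance given each coder's label frequencies. The sketch below shows that calculation in plain Python. It is not NVivo functionality. It assumes each coder's decisions have already been exported as one label per coding unit (for example, 1 if a code was applied to a text segment, 0 if not), in the same unit order for both coders. The function name `cohen_kappa` and the sample data are hypothetical.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same items.

    Hypothetical helper: assumes rater_a[i] and rater_b[i] refer to the
    same coding unit (e.g. the same text segment exported from NVivo).
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same, non-empty list of items")
    n = len(rater_a)

    # Observed agreement p_o: share of units where both raters chose the same label.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement p_e: for each label, multiply the two raters'
    # marginal proportions, then sum over labels.
    count_a = Counter(rater_a)
    count_b = Counter(rater_b)
    p_e = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)

    if p_e == 1:
        # Both raters used one identical label for every unit; kappa is undefined.
        return float("nan")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical data: did each coder apply the code to each of 10 segments?
coder_1 = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0]
coder_2 = [1, 0, 0, 0, 1, 0, 1, 1, 1, 0]
print(round(cohen_kappa(coder_1, coder_2), 3))  # prints 0.6
```

In this example the coders agree on 8 of 10 segments (p_o = 0.8). Each coder applied the code to 5 segments, so chance agreement is p_e = 0.5, which gives κ = 0.3 / 0.5 = 0.6. The result can be cross-checked with scikit-learn's `sklearn.metrics.cohen_kappa_score`. For three or more coders, Fleiss' kappa generalizes the same observed-versus-chance idea.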